
Success matters at Oakton, and for that reason we are committed to strengthening student learning and sustaining a culture of inquiry, self-reflection, and continuous quality improvement. Oakton’s Program for the Assessment of Learning (OPAL) is a group of faculty, staff, and administrators who use learning outcome assessments to collect data on student learning in the areas of general education, transfer courses, career and technical education, and co-curricular activities. Using this data, OPAL can better understand what is happening in student learning to drive positive change and improve our teaching and learning processes through:

OPAL uses the information collected to improve teaching and learning, to modify policies, and/or to reallocate resources. Assessment is ongoing, so data is constantly being collected and analyzed to make Oakton better at helping students become successful. Students are assessed continuously in courses by their instructors. These routine assessments may also be used in the college’s assessment initiatives. Course assessments also come in the form of student surveys.

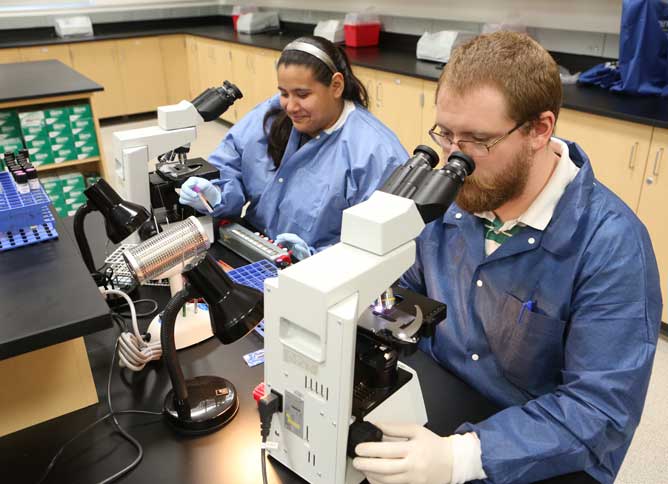

Oakton students gain a variety of learning opportunities in classrooms and laboratories, during activities, and within academic support programs. Each area is assessed by measuring learning outcomes, which may be skill-specific (e.g., did the student learn to create a webpage using HTML?) or college-wide, such as the General Education Learning Outcomes.

The goal of assessment is ultimately to determine if learning took place and to improve teaching and learning results. In other words: Are students learning what we think they are learning?

Assessments are used to validate and improve student learning. Below are examples of how this has improved specific Oakton programs:

Automotive

Through assessment, the automotive department found that one course did not allow enough class time to cover brakes, steering, balancing, and alignment. The program split the course into two courses to increase instructional time.

Computer Network Systems

Through assessment, the CNS department discovered a benefit to administering pre-tests that gauge students' knowledge at the onset of the semester. These pre-tests allow faculty to customize the delivery of content to meet students’ needs.

Law Enforcement

Employer feedback indicated a deficit in law enforcement report writing. As a result, a stepped, report-writing instructional process was instituted. Students began writing reports at several junctures with a cumulative report-writing assignment in the final course. This instructional design change resulted in report writing that exceeded benchmark expectations.

Nursing

The nursing department measured the impact of a two-tiered, mid-curricular (MC) standardized assessment. They discovered that the second tier had no impact on success. Therefore, the second standardized assessment was removed, saving the department over $4,500 per year and both student and faculty time. There are now plans to evaluate the impact of the first tier of the MC assessment.

Learning outcomes assessment also improves student learning in transfer courses. Assessment at Oakton is a continuous process, and our ultimate goal is to make our students as successful as they can be in our global community. The academic areas listed below show examples of how assessment has validated and improved student learning:

Anthropology

The social sciences department assessed multiple course learning objectives in ANT 202, Introduction to Social and Cultural Anthropology. Overall, students successfully met the criteria for success on this assessment as determined by the faculty. However, faculty did identify two areas of weakness: ethnographic fieldwork and research methods. In response, the faculty identified strategies to provide students with more ethnographic fieldwork and research experiences. Department faculty are also revising the course learning objectives and the assessment tool to improve clarity. These actions will prepare the department to reassess the course learning objectives.

Biology

The biology department conducted a three-year assessment of students’ critical thinking skills, focusing on students’ abilities to apply biology knowledge to new information and problems specific to enzymes. Students were tracked as they progressed through two or more biology classes. Over the three years, faculty developed actions to address results as they were analyzed. These actions included providing professional development opportunities in critical thinking and aligning course learning objectives with learning activities and exams, developing department policies specific to critical thinking pedagogical strategies, and sharing best practices among faculty. Students’ critical thinking skills improved, on average, 25 to 30 percent as students progressed through three or more biology courses.

English

The English department assessed the ability of students to analyze, evaluate, compare, and synthesize source materials and use them effectively in assigned essays in EGL 102, Composition II. As the assessment was conducted, EGL faculty learned that they did not share a common definition of the word synthesis in the course learning objective. The college writing workshop group therefore administered a survey to all EGL faculty to determine how faculty define the word. Based on the survey responses, the college writing workshop group provided professional development to address the concept of synthesis and its relationship to students’ abilities to make text-to-text connections. The faculty have now agreed upon a common definition for ‘synthesis’ in the context of current theory about reading and critical reading and will be reassessing this learning objective.

Mathematics

The mathematics department assessed the pass rates in MAT 060, Pre-algebra, over a three-year period. Of the five modules, module three, which covers fractions, proved to be an obstacle for many students. The average passing rate for module three was 57 percent. MAT 060 was redesigned to allow students more time to master the third module. MAT 060 was reassessed after this redesign and 77 percent of students passed.

Co-curricular Assessment

Four years ago, the College began a formal assessment of co-curricular activities to learn how effective these programs are and to discover how and what our students learn outside of the classroom. Leaders from the following co-curricular areas participated:

The GE assessment team worked with faculty to record and evaluate student speeches. Speech faculty developed a rubric to evaluate non-verbal communication skills, such as eye contact, body language, vocal inflection, and clarity. Student speeches were recorded across the curriculum, and the GE team evaluated the speeches using the non-verbal communication rubric. Students performed poorly in all four areas of the rubric.

After sharing the results with the college, the GE team conducted a similar reassessment, this time with the faculty sharing the rubric with the students in advance of their speeches. The students performed better in all four categories, but there were still areas for improvement. Results were again shared with the faculty and a second intervention was implemented. During the reassessment, faculty shared the rubric and a video tutorial created by speech faculty and students. The tutorial focused on attire, eye contact, posture, and vocal inflection. Upon analysis of the data, the areas targeted by the video tutorial saw a 10 to 20 percent improvement. Sharing the rubric produced a marked improvement, and the video tutorial brought further gains.

Since the inception of the non-verbal communication assessment and the associated action plans, students have improved their non-verbal skills by 24 to 48 percent, depending on the category. The results will be the basis for further action to improve student learning and non-verbal communication.

For the five skills where the students had viewed the tutorials, improvements in performance were dramatic, ranging from 32 to 72 percent. However, there was also an improvement in areas where tutorials were not made. Results will be further analyzed to assess the need for future tutorials and action plans to address the remaining weak areas and to see if the success of the tutorials persists in a second assessment.

General education learning outcomes also are assessed throughout our Career and Technical Education (CTE) programs. A review of our CTE program learning outcomes indicates that an overwhelming number of their assessments deal with the college’s General Education Learning Outcomes.

One example of the work of assessment can be seen in the efforts of the Access and Disability Resource Center (ADRC). The ADRC aimed to assess students’ knowledge of the learning objectives of the intake process. Students’ ability to meet the learning objectives was measured through a post-intake survey. Eighty-one percent of the students who completed the process met all of the learning objectives. To increase the success rate, the ADRC modified the intake process to include a separate checklist for students to follow. A second assessment was given and the checklist improved the student success rate by ten percent.

OPAL assesses courses if they meet one or more of the following criteria:

The following are examples of assessment activities: